The Best Design Tool I Used This Year Was a Conversation

Using AI as a thinking partner to design a new kind of social audiobook app

I was preparing for an interview with an audiobook company earlier this year. Standard research — competitive landscape, user reviews, product gaps. I expected to spend a few hours on it and move on.

I didn't move on.

The more I looked at how people actually experience audiobooks, the more one thing kept surfacing. Reading and listening are fundamentally solitary activities, and that solitude is part of the problem. Digital life has made sustained attention harder. People start books and abandon them. Not because the books aren't good. Because there's nothing pulling them back. No commitment, no community, no one to disappoint.

The Silent Book Club phenomenon pointed at something real. People show up to a coffee shop or bar, order a drink, and read alongside strangers for two hours. They finish more books. The social context creates the accountability that the solo experience can't. Nobody is talking. Just being around other readers is enough.

Audiobook apps haven't touched this. There are tens of millions of listeners and none of them can see what the person next to them is reading. That felt like a significant gap.

So I started building.

The concept

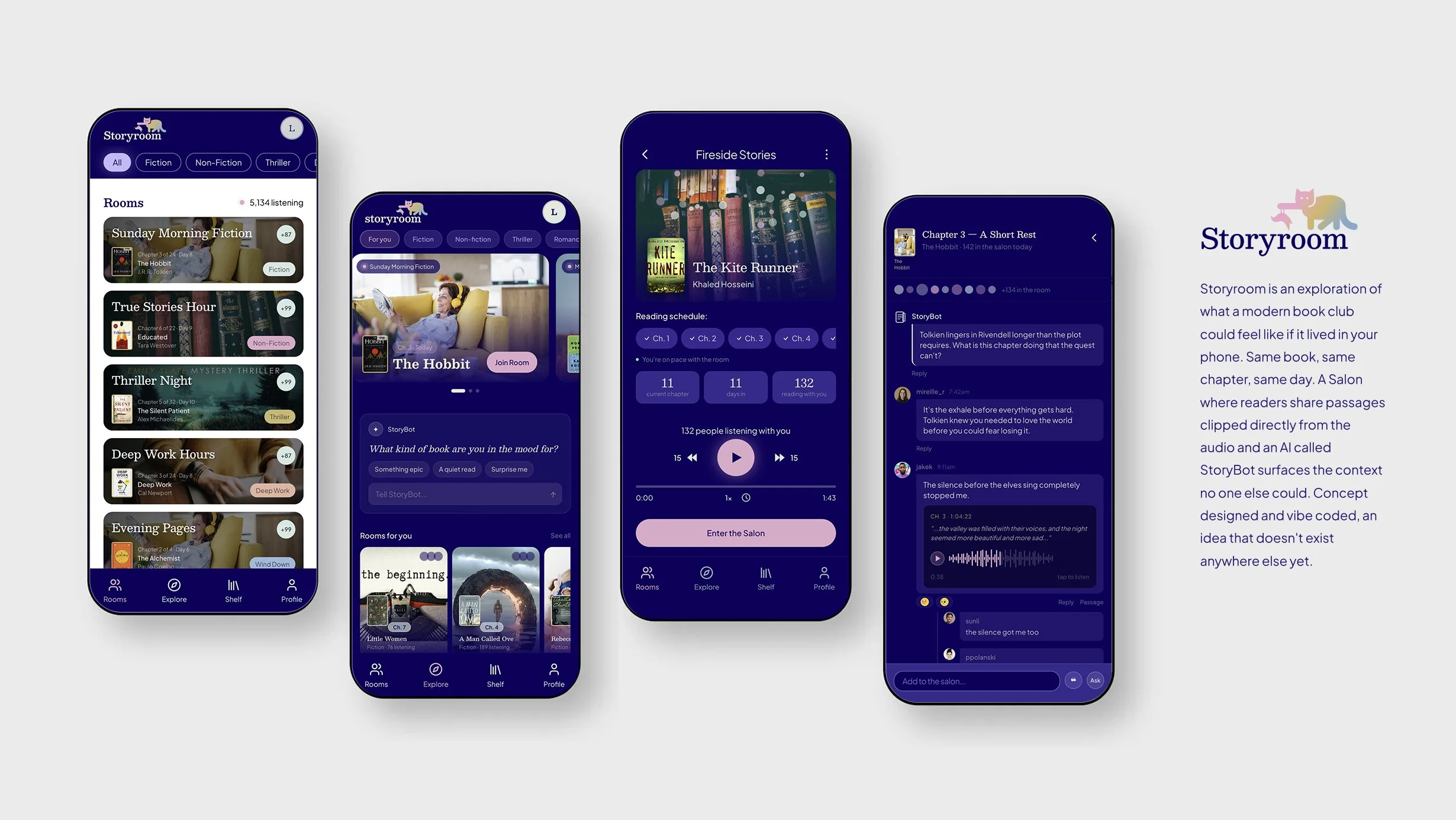

The core idea became Storyroom. Same book, same chapter, same day. A virtual room where people listen on their own schedule but share a pace. At the end of each chapter they gather in a Salon to talk about what they heard — sharing passages clipped directly from the audio, responding to each other, building a conversation around the book they just experienced together.

The second idea was the one I found more interesting. I wanted to know if an AI agent could genuinely extend the value of a book for readers rather than just summarizing it. Not a chatbot. A participant. Something that notices the passage nobody mentioned, surfaces the context only it would know, asks the question that opens the room up rather than closes it down. I called it StoryBot.

StoryBot has three roles. A coach that nudges you toward the day's chapter. A companion that answers questions while you listen. A host that shows up in the Salon with a question worth discussing and steps back when the conversation finds its own momentum. The question I was genuinely curious about: can AI moderate a book club well? I still don't have a complete answer, but the design of the attempt taught me a lot.
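
One way to picture those three roles is as a small decision function in TypeScript. This is a hypothetical sketch, not code from the Storyroom prototype: the names `StoryBotRole`, `RoomContext`, and `pickRole`, and the `MOMENTUM_THRESHOLD` cutoff, are all assumptions made for illustration.

```typescript
// Hypothetical model of StoryBot's three roles. Every name and number here
// is an illustrative assumption, not taken from the Storyroom prototype.
type StoryBotRole = "coach" | "companion" | "host";

interface RoomContext {
  chapterFinished: boolean;    // has this listener finished the day's chapter?
  isListening: boolean;        // is playback currently running?
  inSalon: boolean;            // is the listener in the Salon?
  recentHumanMessages: number; // recent Salon messages written by people
}

// Assumed cutoff: at or above this many recent messages, the host stays quiet.
const MOMENTUM_THRESHOLD = 3;

// Returns the role StoryBot should play right now, or null to stay silent.
function pickRole(ctx: RoomContext): StoryBotRole | null {
  if (ctx.inSalon) {
    // Host: speak only while the conversation has not found its own momentum.
    return ctx.recentHumanMessages < MOMENTUM_THRESHOLD ? "host" : null;
  }
  if (ctx.isListening) {
    return "companion"; // answer questions while the listener is mid-chapter
  }
  if (!ctx.chapterFinished) {
    return "coach"; // nudge toward the day's chapter
  }
  return null; // chapter done, not in the Salon: nothing to say
}
```

The detail that matters is the `null` branch. In this sketch, stepping back is a first-class outcome rather than an afterthought, which is the part of moderating a book club that is easiest to get wrong.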

Storyroom Demo Video

How it got built

The process was unlike anything I'd done before and I want to be honest about what that felt like.

It started with conversation. Not wireframes, not a brief. I spent several sessions talking through the concept with Claude, an AI assistant, first diverging on possibilities for what a social audiobook experience could be, then converging on what was actually worth building. The app name came out of that conversation. So did the product's core logic. I was using the LLM as a thinking partner before I touched any tool.

That was new for me. I've always started in Figma. Where to start is one of the genuinely unresolved questions about working this way. Going straight to code means you're testing real interactions faster. Starting in Figma means you're thinking visually before you're committed to anything. I ended up doing both, in sequence, which turned out to be the right call for this project.

Figma Make gave me rough screens fast. Atmospheric room cards, the Salon feed, the presence orbs that signal other listeners nearby. Fast enough to pressure-test the concept without spending days on it. Then I moved those rough designs into Figma proper to rework them with more control, build a real component library, set up a variable system. That stage is where the design got precise.

From there I connected Figma to Claude Code through an MCP (Model Context Protocol) integration and moved into the terminal. The prototype is a React and TypeScript app on GitHub. It simulates audiobook playback, Salon conversation, StoryBot responses, and waveform passage sharing. Smoke and mirrors, but interactive and shareable and real enough to show people what the experience could feel like.

The meta layer is the part I keep thinking about. Throughout the build I used Claude in the browser to write better prompts for Claude Code in the terminal. The LLM helping me communicate more precisely with the LLM doing the building. It sounds circular. In practice it meant the builds came out right more often on the first pass because the prompts had the right level of detail. Describing UI states and behavior precisely is a design skill. It turned out to transfer directly.

What this means

I've been doing this long enough to have seen a few moments that felt like genuine shifts. Flash in the late nineties. The iPhone. Figma. This feels like one of those moments, and I'm trying to be honest about it rather than either dismissive or evangelical.

The good is real. Ideas that would have taken a team and a sprint cycle to test can now be tested in an afternoon. The gap between what a designer can conceive and what they can ship has compressed dramatically. For someone with 20 years of design experience and a strong point of view, that compression is almost purely a gift. The vision gets out of your head faster. You can hold more of the product in your mind because you're not waiting on others to build what you're imagining.

The craft question is worth taking seriously. There are things you learn from drawing that you don't learn from describing. The muscle memory of pushing pixels, the way your eye develops by solving layout problems slowly, the understanding of engineering constraints that comes from watching engineers work. I worry that designers who skip that foundation will have a harder time knowing when the output is wrong. Knowing when to push back on what the AI produced requires taste, and taste takes time to develop.

But the opportunity is larger than the risk. Designers have always been translators, taking a fuzzy human need and giving it a precise shape. That skill is more valuable now, not less. The tools have changed. The job of caring about the human on the other side hasn't.

Storyroom is a concept. It might become a real product. It might stay a prototype that demonstrated something worth demonstrating. Either way, the observation that started it still feels important to me: reading is getting harder, the social layer is missing, and AI might be able to make book clubs work at a scale that the Wednesday night Zoom call never could.

I built it to find out. The finding out was the point.

Try the Storyroom prototype: https://lanceshields69.github.io/storyroom/